ChatGPT citations reward ranking and precision over length: Study

ChatGPT citations favor pages that rank well, match the query in their headings, and stay tightly focused, according to an AirOps study of 16,851 queries. The top retrieval result was cited 58% of the time, and pages that answered the main query more narrowly outperformed broader, more comprehensive guides.

Why we care. The study points to three ways to earn ChatGPT citations: win retrieval, mirror the query in your headings, and answer one question extremely well. In this dataset, that focus mattered more than breadth.

The findings. Retrieval rank was the strongest signal. Pages in the top search position were cited 58.4% of the time, versus 14.2% for pages in position 10.

- Heading relevance was the strongest on-page factor. Pages whose headings most closely matched the query were cited 41.0% of the time, compared with roughly 30% for weaker matches.

- Focused pages also beat comprehensive ones. Pages that answered the main query narrowly outperformed broader guides, which undercuts the usual “ultimate guide” approach.

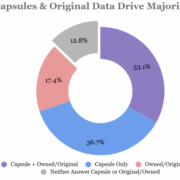

What drove ChatGPT citations. In this study, pages that won citations usually ranked well, used headings that closely matched the query, and stayed focused on answering it.

- Structure helped, but only slightly: Pages with JSON-LD markup posted a 38.5% citation rate versus 32.0% for pages without it, and articles with 4 to 10 subheadings performed best.

- Beyond a certain point, length hurt performance: Pages between 500 and 2,000 words performed best, but pages longer than 5,000 words were cited less often than pages under 500 words.

Freshness helped, up to a point. Pages that were 30 to 89 days old performed best, while pages newer than 30 days performed worse. This suggests new content may need time to build retrieval signals.

- Pages more than 2 years old were cited less often, which suggests that content refreshes could help if you’re already ranking for the right queries.
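
The on-page ranges reported above can be turned into a rough checklist. The Python sketch below is illustrative only: `Page` and `audit` are hypothetical names, not part of the AirOps study, and the thresholds are simply the best-performing ranges the study reported. They are correlations, not rules, so treat any flag as a prompt to review a page rather than a verdict.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """Hypothetical container for the on-page attributes discussed above."""
    word_count: int
    subheading_count: int
    age_days: int
    has_json_ld: bool


def audit(page: Page) -> list[str]:
    """Return notes for attributes outside the study's best-performing ranges."""
    notes = []
    # Pages between 500 and 2,000 words performed best in the study.
    if not 500 <= page.word_count <= 2000:
        notes.append("word count outside 500-2,000")
    # Articles with 4 to 10 subheadings performed best.
    if not 4 <= page.subheading_count <= 10:
        notes.append("subheading count outside 4-10")
    # Pages 30 to 89 days old performed best.
    if not 30 <= page.age_days <= 89:
        notes.append("age outside 30-89 days")
    # JSON-LD markup was associated with a slightly higher citation rate.
    if not page.has_json_ld:
        notes.append("no JSON-LD markup")
    return notes


if __name__ == "__main__":
    # A made-up long, new page with few subheadings and no markup.
    for note in audit(Page(word_count=6000, subheading_count=2,
                           age_days=10, has_json_ld=False)):
        print(note)
```

None of these checks covers retrieval rank or heading-query match, which the study found to be the stronger signals.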

About the data. AirOps said it scraped ChatGPT’s interface, not the API, and analyzed 50,553 responses generated from 16,851 unique queries run three times each. The dataset included 353,799 pages and more than 1.5 million fan-out detail rows across 10 verticals and four query types.
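
The dataset figures are internally consistent, as a quick check shows. In the Python sketch below, the variable names are illustrative. Reading the page total as a per-response average is an assumption, because the article does not say how pages map to responses.

```python
# Figures as reported in the article.
unique_queries = 16_851
runs_per_query = 3
reported_responses = 50_553
reported_pages = 353_799

# 16,851 queries run three times each should equal the reported response count.
computed_responses = unique_queries * runs_per_query
assert computed_responses == reported_responses

# Assumption: treating the page total as spread evenly across responses.
pages_per_response = reported_pages / reported_responses
print(f"responses: {computed_responses:,}")
print(f"pages per response (assumed average): {pages_per_response:.1f}")
```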

The study. The Fan-Out Effect: What Happens Between a Query and a Citation